|

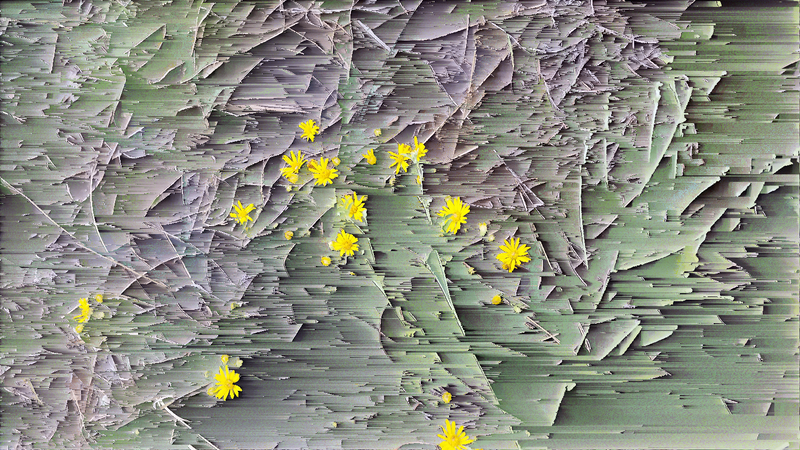

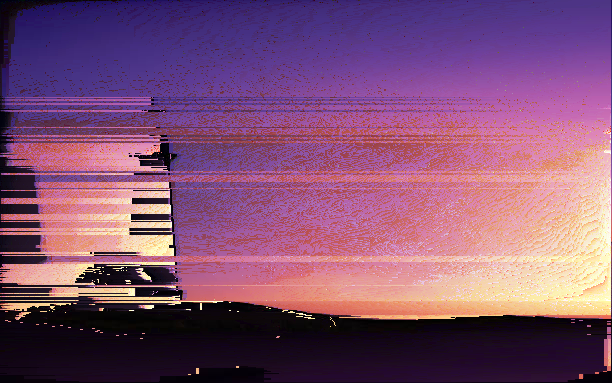

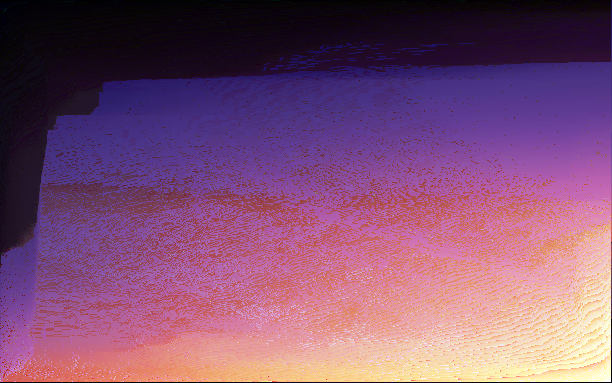

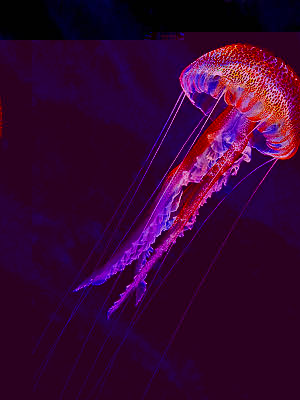

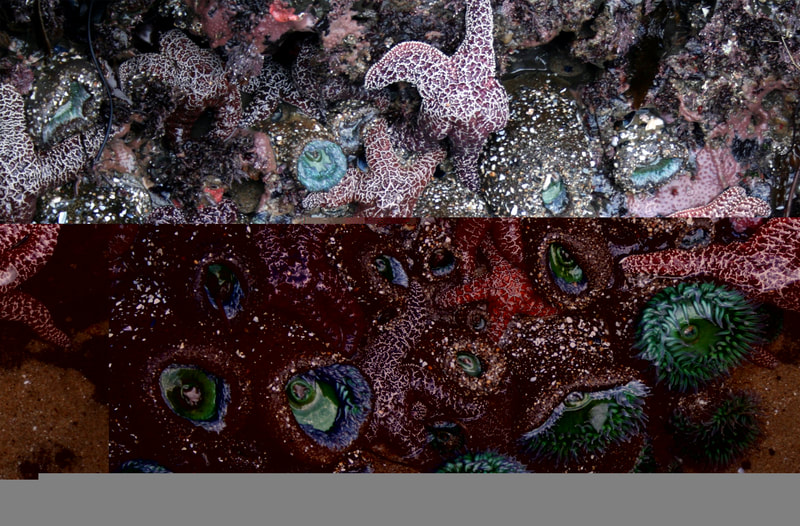

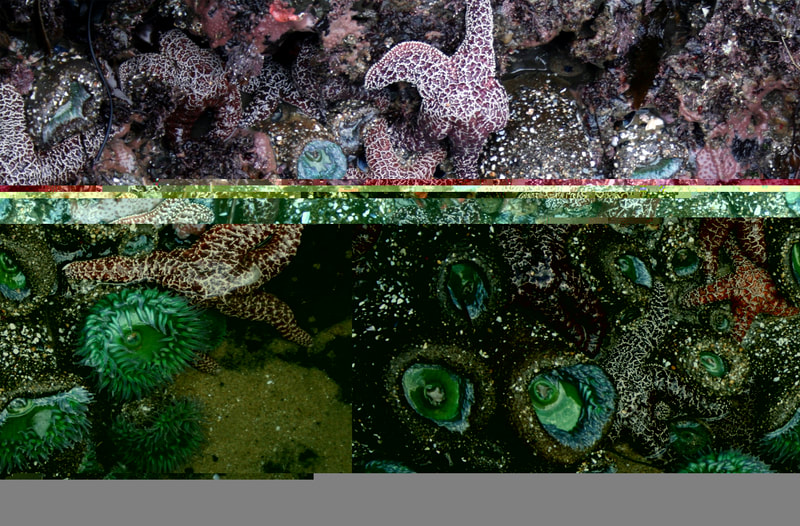

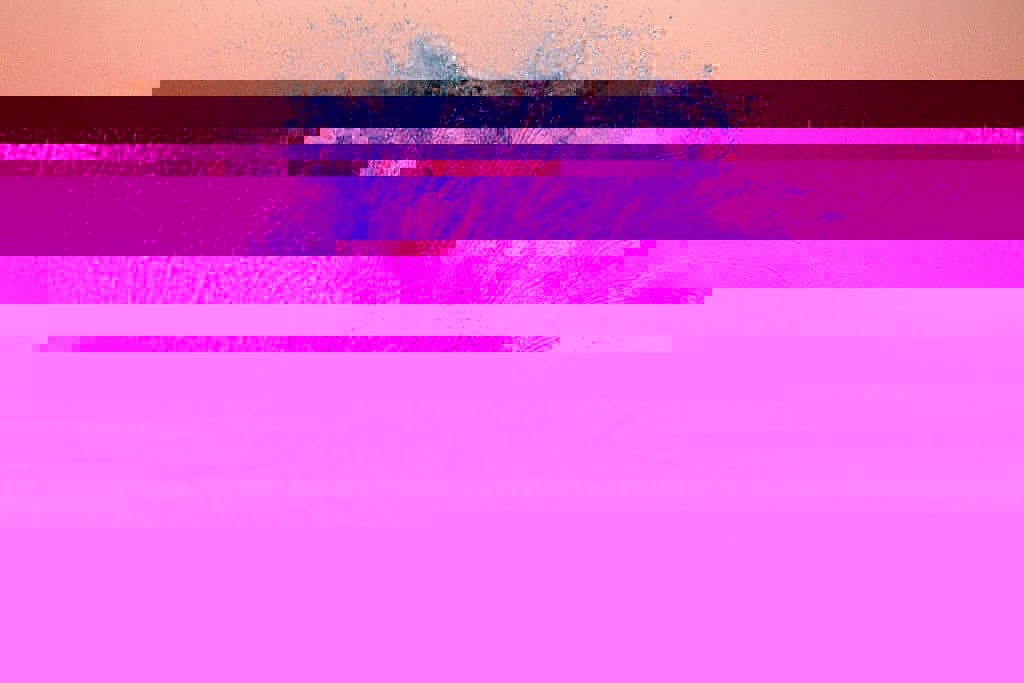

Project 1: Dystopia

For Project 1, I created a collection of 16 stills, 5 GIFs, and a datamosh video on the theme of loss. I selected photos from my travels based on objects I thought would be inaccessible or unavailable centuries from now, and I tried to make them look like corrupted data. As a base for my glitches, I mostly used Notepad++ combined with a hex editor because it gave me the best color-changing glitches, but I also used Processing for a couple of pixel-sorted stills.

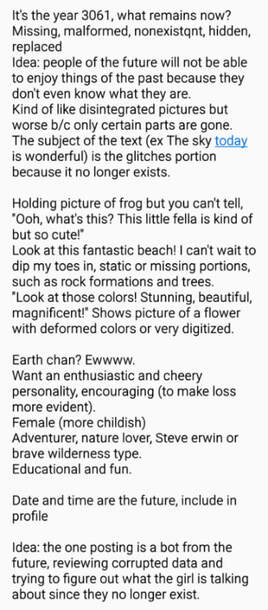

Stills

The first set of stills are the glitch images, while the second set are the original, untouched images.

Glitch images:

Original images:

GIFs

The GIFs were surprisingly difficult to make and took much more time than expected, consuming most of my effort for this project. I used slightly different glitch effects to mask what I thought would no longer exist, so I was able to play with a few different visuals. I stuck with black-and-white edits of various wave effects and put them together using Photoshop's timeline. The most time-consuming GIFs were the flower and the fence because I had to edit out the entire subject. The GIFs came out well, but I struggled to get through them because of how long they took. For future reference, the easiest images to work with are the ones that don't need subjects edited out, such as the image with tea cups.

DataMosh

The datamosh video was very fun to make, and it turned out better than expected. At first I was worried that the small movements I chose to scrub wouldn't melt well, but they worked surprisingly well. I had to edit the video clip multiple times in Avidemux, but it was fun to look at the results each time I exported an edit. The hardest part was syncing the datamosh video to the original sound, and I needed to shorten certain scenes so that video and voice fit together. Overall, this glitch outcome is one of my favorite pieces for this project, and I think the datamosh corruption on a clip of axolotls, an endangered species, works well as commentary.

The datamosh video used for this project.

Twitter bots

Two Twitter accounts were set up to simulate a call-and-response from one account to the other. The basic premise was that C3_56T, an archive robot in the future, accessed and posted about an adventurous girl's travel account from a thousand years ago. Due to corrupted data, the bot displays its confusion and posts to see if anybody can help identify the glitched images.

The first account, Eminy Emily, was made private to feel mysterious and to keep people from seeing the "past". The second account, C3_56T, is public and acts as a window to the past by retrieving "lost data" from the first account. The personality of Eminy Emily was made to be bubbly and charming, and every post has some sort of emoticon attached to it. C3_56T, on the other hand, is more stoic and analytic. This difference in personalities reinforces the contrast between past and future, from something familiar and warm to something distant and cold.

Initially, I planned to post all assets from this project to Eminy Emily's account, but then I realized I didn't need to for the GIFs and datamosh video, since they would have to be posted directly to C3_56T's account. I then took screenshots of some Eminy Emily posts and posted them to C3_56T's account with confused commentary.

As the second part of the Twitter project, I set up Twitter bots to post on both accounts. The automatic messages were made to feel as diverse as possible, so each bot's code had several phrases with multiple parts that provided a variety of mix-and-match options. I tested each phrase's code until the bot for each account seemed varied enough. The setup of each bot is easier to see and understand in the code below. I left the posting schedule on manual so that I had control over how often the bot would post. Eminy Emily's bot code focuses solely on text because few people would be able to see it. C3_56T's bot code, on the other hand, includes line breaks, images, and reposts of previous tweets, which makes the code a little more complex. For me, the coding was quite fun, although it involved a lot of logical thinking. I enjoyed the process and am curious just how far I can make each automated tweet feel individual.

Twitter bot code for Eminy Emily:

    {
      "origin": ["#opening# this #color animal#! The colors #color descriptor#.", "#today location# #scenery# is #location descriptor# #happy emoticon#"],
      "opening": ["Take a look at", "I bet you haven't seen anything like", "Check out"],
      "color animal": ["tiger", "frog", "turtle", "snake", "whale", "funky bird", "chameleon", "scaly friend", "parrot", "tropical fish", "butterfly", "chameleon"],
      "color descriptor": ["look so pretty", "are so vibrant", "are really neat", "stand out so much", "are so eyecatching", "are amazingly captivating"],
      "location": ["the Amazon Rain Forest", "the beach", "the mountains", "the Amazon River"],
      "scenery": ["scenery", "landscape", "view"],
      "location descriptor": ["breathtaking", "so refreshing", "amazing!", "worth the trek", "so beautiful ~", "to die for!"],
      "happy emoticon": ["^^", "(^▽^)", "( ˘ ³˘)♥", "o(〃^▽^〃)o"],
      "today location": ["Today I went to #location#, and #exclamation#, the", "I took a picture of #location# today! The", "Today I went to #location# (^▽^)! The", "Today's picture is from #location#! The", "Check out this picture of #location# I took #happy emoticon# The", "Take a look at this picture of #location#! The"],
      "exclamation": ["oh boy", "wow", "whew"],
      "the": ["the"]
    }

Twitter bot code for C3_56T:

    {
      "origin": ["Opening #num#", "Reopening #case#"],
      "loading": ["■■■□□□□□□□ NOW LOADING", "■■■■■□□□□□ NOW LOADING", "■■□□□□□□□□ NOW LOADING", "■□□□□□□□□□ NOW LOADING", "■■■■□□□□□□ NOW LOADING", "■■■■■■■■□□ NOW LOADING", "■■■■■■□□□□ NOW LOADING", "■■■■■■■■■□ NOW LOADING", "■■■■■■■□□□ NOW LOADING"],
      "num": ["Case-1320: @EmilyEminy\n Beginning retrieval process, Log 3025-04.16 \n #loading# \n #imgresponse# \n {img https://placeimg.com/640/480/animals/image.jpg}"],
      "imgresponse": ["Subject unidentifiable.", "Unable to decipher file.", "Too many unknowns.", "File of questionable origins.", "Curious ...", "Unable to identify subject.", "Attempting to decipher ...", "Requires further assistance.", "Requires further assistance.", "Data insufficient ...", "Need assistance to identify subject.", "Unable to find a match with archives.", "Locating subject ...", "Locating subject ...", "...", "No matching data found.", "File exceeds corruption limit.", "File exceeds corruption limit.", "File beyond repair.", "Hmm ...", "Hmm ...", "Attempting to reference archives.", "Unable to process file.", "Insufficient data."],
      "case": ["Case-1320. \n Beginning retrieval process. \n #loading# \n #linkresponse# \n #twtlink#"],
      "linkresponse": ["Too many unknowns.", "Attempting to decipher ...", "Requires further assistance.", "Need assistance to identify subject.", "Need assistance to identify subject.", "Unable to identify subject.", "Requires additional insight."],
      "twtlink": [""]
    }
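
To make the mix-and-match idea concrete, here is a minimal Python sketch of how a grammar in this `#symbol#` style can be expanded. It is not the bot service's actual code: the cut-down `GRAMMAR` reuses a few rules from the Eminy Emily bot, and the function names `expand` and `count_variants` are my own assumptions for illustration. It also ignores extras such as the `{img ...}` tag and the `\n` line breaks used by C3_56T.

```python
import random
import re

# Hypothetical mini-grammar reusing a few rules from the Eminy Emily bot.
GRAMMAR = {
    "origin": ["#opening# this #color animal#! The colors #color descriptor#."],
    "opening": ["Take a look at", "Check out"],
    "color animal": ["tiger", "frog", "parrot"],
    "color descriptor": ["look so pretty", "are so vibrant"],
}

# A symbol is any text wrapped in a pair of # characters.
SYMBOL = re.compile(r"#([^#]+)#")


def expand(grammar, text, rng):
    """Replace each #symbol# with a random option, recursing until none remain."""
    def pick(match):
        options = grammar[match.group(1)]
        return expand(grammar, rng.choice(options), rng)
    return SYMBOL.sub(pick, text)


def count_variants(grammar, text):
    """Count the expansion paths of a template (duplicate options count separately)."""
    total = 1
    for name in SYMBOL.findall(text):
        total *= sum(count_variants(grammar, option) for option in grammar[name])
    return total


rng = random.Random()
print(expand(GRAMMAR, "#origin#", rng))      # one randomly assembled post
print(count_variants(GRAMMAR, "#origin#"))   # 2 * 3 * 2 = 12 paths
```

Counting paths this way is a quick check on how varied a bot can sound. Listing an option twice, as C3_56T does with "Requires further assistance.", adds a path without adding a new sentence, so it only makes that response more likely.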


Moving on from still images, video glitches were made using Avidemux. By copy-pasting and deleting selections of P-frames and I-frames, I was able to create color-changing and melting effects.

By using a variety of layer modes and RGB channel changes, a series of fake Photoshop glitches was made into a GIF. I tried out three different shape approaches and enjoy the timing of the frames.
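
The I-frame and P-frame edits described for Avidemux can be pictured with a small Python sketch. It only models the order of frames as labelled tuples and does not read or write real video, so the frame labels and the function names `remove_keyframes` and `repeat_frame` are assumptions made for illustration. The idea is that an I-frame stores a full picture while a P-frame stores only changes, so removing an I-frame makes the following motion apply to the wrong picture.

```python
def remove_keyframes(frames):
    """Drop every I-frame except the first so later motion smears over old pixels."""
    kept = []
    seen_first = False
    for kind, label in frames:
        if kind == "I" and seen_first:
            continue  # skipping this full picture is what produces the melt
        seen_first = seen_first or kind == "I"
        kept.append((kind, label))
    return kept


def repeat_frame(frames, index, times):
    """Paste extra copies of the P-frame at `index` right after it."""
    if frames[index][0] != "P":
        raise ValueError("only P-frames are repeated in this sketch")
    return frames[: index + 1] + [frames[index]] * times + frames[index + 1:]


# Hypothetical clip: two scenes, each starting with a full picture (I-frame).
clip = [
    ("I", "scene1"),
    ("P", "scene1-move1"),
    ("P", "scene1-move2"),
    ("I", "scene2"),
    ("P", "scene2-move1"),
]

moshed = remove_keyframes(clip)          # scene2's motion now moves scene1's pixels
stretched = repeat_frame(moshed, 2, 3)   # the same motion is applied again and again
print([kind for kind, _ in stretched])
```

Deleting corresponds to `remove_keyframes` and copy-pasting to `repeat_frame`. A real file still needs a tool such as Avidemux to make these edits.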

Through more coding-based edits, glitches were made with Processing. Processing seems well suited to altering the original image while retaining its colors, and it offers a variety of shapes. The original image is on the far left.
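
Pixel sorting, which I used for a couple of the Project 1 stills, is one example of a code-based edit that keeps the original colors. The sketch below is written in Python rather than Processing and is not the sketch I actually ran. It treats an image as nested lists of RGB tuples, and the `threshold` value and function names are assumptions chosen for illustration.

```python
def brightness(pixel):
    """Average of the red, green, and blue values of one pixel."""
    r, g, b = pixel
    return (r + g + b) / 3


def sort_row(row, threshold=60):
    """Sort each run of pixels brighter than `threshold`; leave dark pixels in place."""
    out = list(row)
    start = None
    # The trailing None acts as a sentinel that closes a run ending at the row's edge.
    for i, pixel in enumerate(list(row) + [None]):
        bright = pixel is not None and brightness(pixel) > threshold
        if bright and start is None:
            start = i
        elif not bright and start is not None:
            out[start:i] = sorted(row[start:i], key=brightness)
            start = None
    return out


def pixel_sort(image, threshold=60):
    """Apply the row sort to every row of an image given as a list of rows."""
    return [sort_row(row, threshold) for row in image]


row = [(0, 0, 0), (200, 200, 200), (100, 100, 100), (150, 150, 150), (10, 10, 10), (90, 90, 90)]
print(pixel_sort([row])[0])
```

Because pixels are only reordered, never recolored, the result keeps the palette of the original image, which matches what I liked about the Processing glitches.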

I wanted a concept focused on conservation, and I came up with the idea of something lost and unrecoverable. I thought of using glitched images to mask organisms and environments that are normal today and reacting to them as if I were a person from the future. In this scenario, the glitched portions would not exist in the future, so the person reacting would try to guess what they could be. The purpose of these reactions would be to make people realize we shouldn't take what we have for granted.

I started with the idea of a girl in the present day who travels a lot and likes to post pictures, then I added a second character to react to her so we get more narrative from a different point of view. The concept is a robotic personality posting from the future who reacts to, and tries to decipher, a cheerful girl's posts from the past about objects that no longer exist.

Intentional glitches were created in the sound editing program Audacity by translating the image's code into a waveform and then applying different effects to selected portions of the audio. The audio was then exported back into JPEGs and viewed to see what kind of changes took place. Despite the variety of effects used (Echo, Bass and Treble, Reverb, and Invert, to name a few) and the different sizes of the sections they were applied to, the glitches made with Audacity came out looking very similar. For me, Audacity is the least reliable form of glitch making from today's lessons because the images don't load half the time. The original image is on the far left.
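
As a rough picture of what an audio effect does to image data, the Python sketch below treats each byte of a file as one unsigned 8-bit sample and mixes in a delayed, quieter copy. This is a simplified echo of my own, not Audacity's algorithm, and the `delay`, `decay`, and byte ranges are assumed values. Bytes outside the selected range are left untouched, and one possible reason some images fail to load is that an effect reaches bytes the file format depends on.

```python
def echo_bytes(data, start, end, delay=64, decay=0.5):
    """Add a delayed, quieter copy of the byte stream to itself inside [start, end)."""
    samples = bytearray(data)
    # Begin no earlier than `delay` so there is always an earlier byte to echo.
    for i in range(max(start, delay), min(end, len(data))):
        mixed = data[i] + decay * data[i - delay]
        samples[i] = min(255, int(mixed))  # clip to the 8-bit range
    return bytes(samples)


# Stand-in for image bytes; a real run would read them with open(path, "rb").
fake_image = bytes(range(256)) * 2
glitched = echo_bytes(fake_image, start=100, end=300)
print(glitched[100], fake_image[100])
```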

A series of images was modified with text programs and saved to create intentional glitches. Part of the difficulty in creating a glitch is that images respond differently to the programs, so you might intend a certain effect but not get the desired result. Although it is difficult to understand how glitches respond to changes in their code, one pattern I've noticed is that blocks of color form when code is deleted or when in-line code is interrupted. In Notepad++, the position of an edit in the code seems to correspond to the vertical location in the image, so not touching the top of the code might be why the top of an image is undisturbed. Hexed.it is harder to understand, since replacing and deleting code may not leave any visible changes.

Notepad++

The original image is displayed on the far left.

Hexed.it

The original image is displayed on the far left for each set.
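
The pattern noted above for Notepad++, where the place an edit is made in the code seems to match the height at which the glitch appears, can be tested in a repeatable way with a short Python sketch. It deletes a few bytes at a chosen relative position while skipping the start of the file. The `header` size of 1024 bytes is an assumed safety margin, not a measured one, and `delete_at` is a hypothetical helper. In a baseline JPEG the compressed picture data is stored roughly from top to bottom, which would be consistent with this observation, though other formats may behave differently.

```python
def delete_at(data, fraction, length=16, header=1024):
    """Delete `length` bytes at a relative position in the file, skipping the header.

    fraction=0.0 edits just after the header, fraction=1.0 edits at the very end.
    Returns the edited bytes and the offset where the deletion happened.
    """
    if not 0.0 <= fraction <= 1.0:
        raise ValueError("fraction must be between 0 and 1")
    body = len(data) - header
    if body <= length:
        raise ValueError("file is too small for this header and length")
    offset = header + int(fraction * (body - length))
    return data[:offset] + data[offset + length:], offset


# Stand-in for image bytes; a real run would read them with open(path, "rb").
fake_image = bytes(range(256)) * 16
halfway, offset = delete_at(fake_image, 0.5)
print(offset, len(fake_image) - len(halfway))
```

Running the same deletion at several fractions and comparing the saved files side by side is one way to check whether the glitch moves down the image as the edit moves later in the file.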

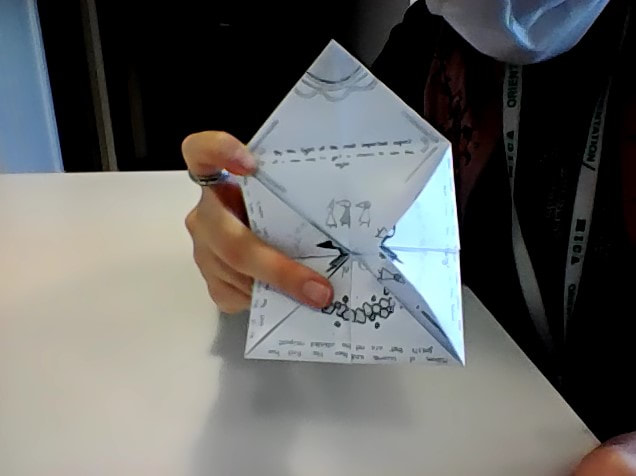

Article: "'The Most Expensive GIF of All Time' Is Being Sold for $5,800"

I think Michael Green makes a good point that the rise of digital art shows the direction of future artwork. Digital art has so much potential to change the creator’s and the viewer’s experience, and we already see it used in gaming, animation, and education. VR and AR technology has a wide variety of uses across different media, and we have developed programs such as Photoshop that let us create and distort images in ways that wouldn’t be possible otherwise. The digital field is open for exploration, with new methods and programs for creating images still to be discovered. However, I don’t think digital art is the end-all-be-all. Personally, I like to mix up whether I create something analog, digital, or both.

An example where both analog and digital techniques were used to compose a greeting card, 2021

Green’s dream of a digital era seems to be realized with the rise of non-fungible tokens (NFTs), which show how the popularity of a market platform can lead to a rise in the presence and popularity of digital art. Support from a growing audience and increased presence in a well-known market have helped digital artists become more visible. However, the NFT market is a prime example of the digital market mimicking the traditional one, in which an art piece becomes a collectible token rather than

With the rise of a more viable market for digital artwork, the issue of regulation for digital mediums has become more prominent. Along with the problem of impersonation, other issues include energy consumption, blockchain storage, and a future market in which traced or low-effort tokens become a mainstay. Due to the growth of activity on the Internet, higher levels of regulation are required to stop the misuse and impersonation that NFTs were supposed to prevent.

Article: "Gauging the Environmental Impact of NFTs"

As for the shift into digital art, unlike Green, I do not believe that physical museums are dead. The great thing about museums and galleries is the physicality and movement you experience when walking through different areas of the building; the museum is more than just a visual spectacle but

If you consider the use of projections and effects in concerts, esports, competitions, and experiences, the physical sphere is just as important as the digital one. Digital art and methods even allow for physical reproduction, such as posters and 3D printing. Although the digital market is profitable, an original, limited piece is still valued higher than its digital counterpart.

Example of a performance combining live performance with digital art and effects; a particular favorite is at the 2:32 mark.

Source: "GIANTS - Opening Ceremony Presented by Mastercard | 2019 World Championship Finals", YouTube, Uploaded by League of Legends, 10 Nov 2019, https://www.youtube.com/watch?v=WFkZjTCxVPc

Rather than separating the two, I think physical and digital art should exist in harmony. I want both a screen background and a poster of the art I like. Digital forms allow us to edit art and make it more accessible, while the physical form allows us to enjoy the work as it was originally made. All in all, digital art is a playing field for people to try out new things and experiment. However, this doesn’t mean traditional art is any less valued. Beauty is in the eye of the beholder, after all. |

Archives

May 2022


|
